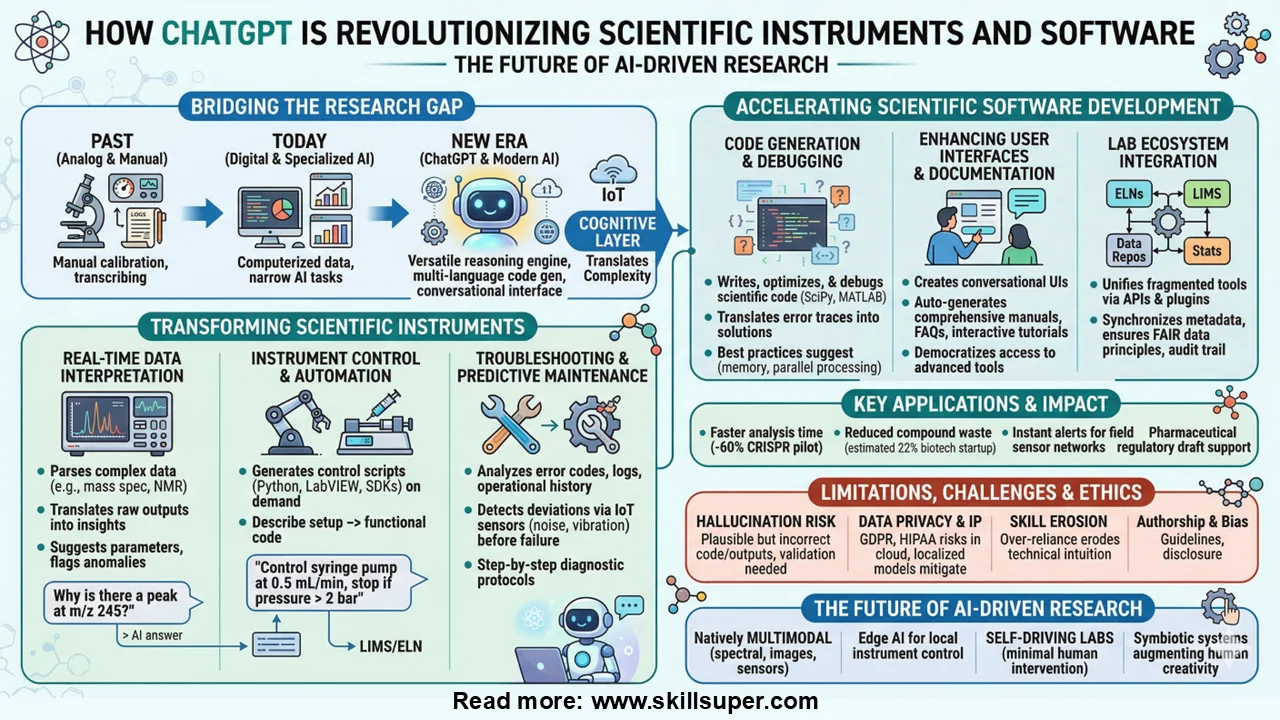

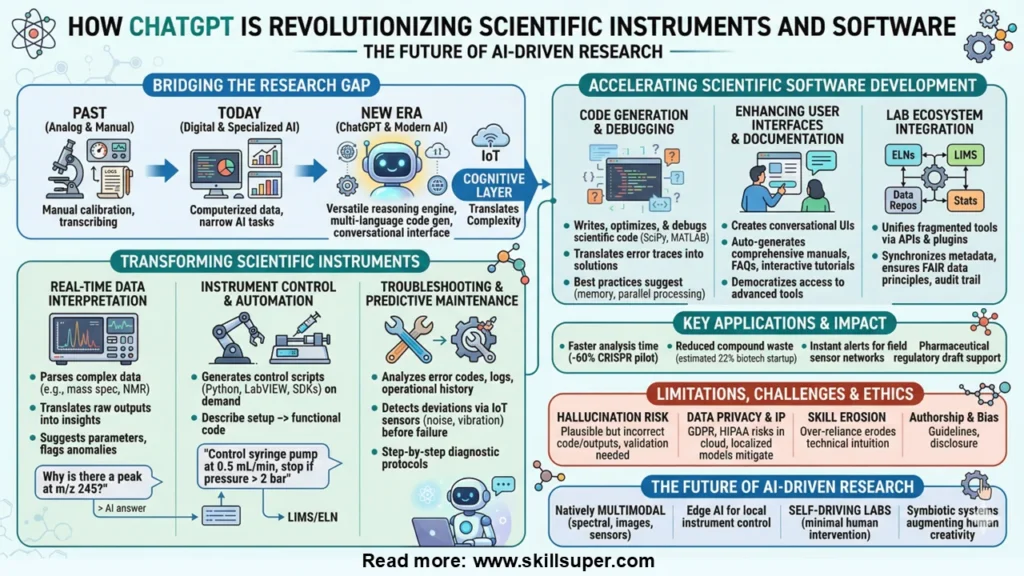

How ChatGPT Is Revolutionizing Scientific Instruments and Software: The Future of AI-Driven Research

Introduction

The landscape of modern scientific research is undergoing a profound transformation, driven by the rapid integration of artificial intelligence into laboratories, field equipment, and computational workflows. At the forefront of this revolution is ChatGPT, a large language model developed by OpenAI that has quickly evolved from a conversational assistant into a powerful cognitive layer for scientific instrumentation and software development. From automating complex data analysis pipelines to generating custom control scripts for laboratory hardware, ChatGPT is fundamentally reshaping how researchers interact with their tools.

Historically, scientific progress has been constrained by the gap between instrument capability and human interpretive capacity. Today, that gap is closing. In this guide, we’ll explore how ChatGPT is being integrated into scientific instruments and research software, the tangible benefits it delivers, the technical and ethical challenges it presents, and what the future holds for AI-augmented research infrastructure. Whether you’re a principal investigator, lab manager, computational scientist, or instrumentation engineer, understanding this shift is no longer optional; it’s essential.

The Rise of AI in Scientific Research & Instrumentation

Scientific instrumentation has evolved dramatically over the past century. Early microscopes, spectrometers, and chromatographs required manual calibration, analog readouts, and painstaking data transcription. The digital revolution introduced computerized data acquisition, graphical user interfaces, and standardized file formats, but true autonomy remained elusive. Today, artificial intelligence is bridging the gap between raw instrument output and actionable scientific insight.

Machine learning algorithms now power everything from autonomous electron microscopes that self-adjust focus and exposure to smart PCR thermal cyclers that optimize cycling parameters based on real-time amplification curves. Yet, most of these AI implementations are narrow: trained for specific tasks, locked into proprietary ecosystems, or requiring extensive data science expertise to operate.

ChatGPT enters this landscape as a versatile, general-purpose reasoning engine. Unlike traditional models trained exclusively on numerical or image data, ChatGPT excels at natural language understanding, contextual reasoning, and multi-language code generation. This makes it uniquely suited for scientific software development, instrument documentation, protocol optimization, and real-time troubleshooting. As laboratories increasingly adopt IoT-connected devices and cloud-based analysis platforms, ChatGPT serves as a conversational interface that translates complex technical commands into intuitive, human-readable workflows.

How ChatGPT Is Transforming Scientific Instruments

Real-Time Data Interpretation & Analysis

Modern scientific instruments generate terabytes of complex, multidimensional data daily. Interpreting this data traditionally requires specialized software, statistical expertise, and hours of manual processing. When integrated with instrument APIs or data pipelines, ChatGPT acts as an intelligent intermediary that translates raw outputs into structured, contextual insights.

For example, when connected to a mass spectrometer or NMR system via middleware, ChatGPT can parse spectral data, identify peak patterns, cross-reference chemical databases, and generate preliminary reports in natural language. While it doesn’t replace domain-specific analytical algorithms (like Fourier transforms or deconvolution routines), it enhances them by providing contextual explanations, suggesting optimal analysis parameters, and flagging anomalies.

Researchers can query the system using plain language: “Why is there a secondary peak at m/z 245?” or “Compare this chromatogram with the reference standard from last week.” Given access to the relevant data, and to literature search where a retrieval tool is connected, the model can apply statistical reasoning and return candidate interpretations for the researcher to verify. This capability can reduce cognitive load and shorten time-to-insight, particularly in multidisciplinary labs where team members may not share the same technical background.
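
As a minimal sketch of the preprocessing that middleware might do before a question like the m/z 245 query reaches the model, the following Python reduces a spectrum to a short plain-text peak list. The function names, the intensity threshold, and the sample values are illustrative assumptions, not part of any instrument API or of ChatGPT itself.

```python
from typing import List, Tuple


def find_peaks_simple(
    mz: List[float], intensity: List[float], min_intensity: float
) -> List[Tuple[float, float]]:
    """Return (m/z, intensity) pairs for local maxima above min_intensity."""
    if len(mz) != len(intensity):
        raise ValueError("mz and intensity must have the same length")
    peaks = []
    # Endpoints are skipped because they have only one neighbour.
    for i in range(1, len(intensity) - 1):
        is_local_max = intensity[i - 1] < intensity[i] >= intensity[i + 1]
        if is_local_max and intensity[i] >= min_intensity:
            peaks.append((mz[i], intensity[i]))
    return peaks


def summarize_peaks(peaks: List[Tuple[float, float]]) -> str:
    """Format peaks as plain text suitable for inclusion in a prompt."""
    if not peaks:
        return "No peaks above threshold."
    lines = [f"m/z {m:.1f}: intensity {i:.0f}" for m, i in peaks]
    return "\n".join(lines)


# Hypothetical sample spectrum (made-up values for illustration only).
sample_mz = [243.0, 244.0, 245.0, 246.0, 247.0, 248.0, 249.0]
sample_intensity = [5.0, 20.0, 310.0, 40.0, 12.0, 950.0, 30.0]

peaks = find_peaks_simple(sample_mz, sample_intensity, min_intensity=100.0)
print(summarize_peaks(peaks))
```

Sending a compact summary like this, rather than the raw trace, keeps the prompt small and leaves the numerical work to deterministic code that can be validated on its own.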

Instrument Control & Automation

Laboratory automation has long been constrained by the need for specialized programming skills. Setting up a robotic liquid handler, synchronizing a temperature controller with a data logger, or optimizing a microscopy imaging sequence typically requires expertise in Python, LabVIEW, or instrument-specific SDKs. ChatGPT dramatically lowers this barrier by generating, debugging, and optimizing control scripts on demand.

Researchers can describe their experimental setup in plain English, and the model will draft code tailored to specific hardware interfaces. A prompt like “Write a Python script using pySerial to control a syringe pump at 0.5 mL/min, record flow rate every 10 seconds, and stop if pressure exceeds 2 bar” typically yields a working draft with error handling, logging, and unit conversions. That draft still has to be checked against the pump’s command reference and tested before it drives real hardware.
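
To make the syringe pump prompt concrete, here is a rough sketch of the control loop such a script might contain. It is written against any object with `write` and `readline` methods so it can run without hardware. The command strings (`RATE`, `RUN`, `READ?`, `STOP`) and the reply format are hypothetical; a real pump defines its own protocol in its manual.

```python
import time
from typing import Callable, List, Protocol, Tuple


class SerialLike(Protocol):
    """Minimal interface shared by a pySerial port and test doubles."""

    def write(self, data: bytes) -> object: ...
    def readline(self) -> bytes: ...


MAX_PRESSURE_BAR = 2.0
FLOW_RATE_ML_MIN = 0.5
LOG_INTERVAL_S = 10.0


def run_pump(
    port: SerialLike,
    n_samples: int,
    sleep: Callable[[float], None] = time.sleep,
) -> List[Tuple[float, float]]:
    """Start the pump, log (flow, pressure) readings, stop on overpressure."""
    readings: List[Tuple[float, float]] = []
    # Hypothetical commands; replace with those from the pump's manual.
    port.write(f"RATE {FLOW_RATE_ML_MIN}\r\n".encode("ascii"))
    port.write(b"RUN\r\n")
    try:
        for _ in range(n_samples):
            port.write(b"READ?\r\n")
            reply = port.readline().decode("ascii").strip()
            # Assumed reply format: "<flow mL/min>,<pressure bar>"
            flow_text, pressure_text = reply.split(",")
            flow, pressure = float(flow_text), float(pressure_text)
            readings.append((flow, pressure))
            if pressure > MAX_PRESSURE_BAR:
                break
            sleep(LOG_INTERVAL_S)
    finally:
        # Always stop the pump, even if parsing or I/O fails.
        port.write(b"STOP\r\n")
    return readings


class FakePump:
    """Test double that replays canned replies instead of real hardware."""

    def __init__(self, replies: List[bytes]) -> None:
        self.replies = list(replies)
        self.sent: List[bytes] = []

    def write(self, data: bytes) -> int:
        self.sent.append(data)
        return len(data)

    def readline(self) -> bytes:
        return self.replies.pop(0)


fake = FakePump([b"0.50,1.2\r\n", b"0.49,2.3\r\n", b"0.50,1.0\r\n"])
log = run_pump(fake, n_samples=3, sleep=lambda seconds: None)
print(log)
```

With real hardware, the same function could be handed a pySerial port such as `serial.Serial("/dev/ttyUSB0", 9600, timeout=1)`, where the device path and baud rate are placeholders. Keeping the hardware behind a small interface is a deliberate choice: it lets the overpressure logic be exercised with a fake before anything is connected.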

When integrated with lab management systems like Electronic Lab Notebooks (ELNs) or Laboratory Information Management Systems (LIMS), ChatGPT can orchestrate multi-instrument workflows, schedule runs based on resource availability, and automatically adjust parameters in response to real-time feedback. This level of intelligent automation increases throughput, minimizes human error, and enhances reproducibility—a critical factor in peer-reviewed research and regulatory compliance.

Troubleshooting & Predictive Maintenance

Instrument downtime can derail experiments, waste expensive reagents, and delay publications. Traditional troubleshooting relies on manufacturer manuals, vendor support tickets, or institutional technicians. ChatGPT introduces a proactive, AI-assisted approach by analyzing error codes, system logs, and operational histories to diagnose issues and recommend solutions.

When connected to a spectrophotometer, centrifuge, or HPLC system via IoT sensors, a monitoring pipeline built around the model can surface subtle deviations in baseline noise, motor vibration, or temperature stability before they escalate into catastrophic failures. A researcher might input: “The HPLC system shows fluctuating baseline pressure and inconsistent retention times. What are the most likely causes and how do I fix them?” ChatGPT draws on what it has learned from manufacturer documentation, troubleshooting guides, and community forums, or on documents supplied to it directly, to generate a step-by-step diagnostic protocol.

Beyond reactive fixes, it supports predictive maintenance by identifying usage patterns that correlate with component degradation. Over time, labs can leverage these insights to schedule calibrations, replace seals, or update firmware during non-critical periods, maximizing instrument uptime and extending equipment lifespan.
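
One simple way to surface the kind of deviation described above is a trailing-window z-score over sensor readings. The sketch below is a generic statistical check, not a feature of ChatGPT; the window size, threshold, and pressure values are assumptions chosen for illustration.

```python
from statistics import mean, stdev
from typing import List


def flag_deviations(
    values: List[float], window: int = 5, z_threshold: float = 3.0
) -> List[int]:
    """Return indices whose value deviates from the trailing window mean
    by more than z_threshold standard deviations."""
    if window < 2:
        raise ValueError("window must be at least 2")
    flagged = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            # Flat history: any change at all counts as a deviation.
            if values[i] != mu:
                flagged.append(i)
            continue
        if abs(values[i] - mu) / sigma > z_threshold:
            flagged.append(i)
    return flagged


# Hypothetical HPLC pressure readings in bar (made-up values).
pressure = [120.1, 119.9, 120.0, 120.2, 119.8, 120.0, 120.1, 124.5, 120.0]
print(flag_deviations(pressure))
```

The flagged indices, together with the surrounding log lines, are the sort of compact context that could then be passed to the model for a plain-language explanation. Note that a flagged outlier stays in the window for the next few readings and inflates the standard deviation, so a production check would likely exclude flagged points or use a more robust statistic.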

ChatGPT in Scientific Software Development

Accelerating Code Generation & Debugging

Scientific software development has historically been a niche discipline, requiring researchers to balance domain expertise with programming proficiency. ChatGPT has emerged as a force multiplier in this space, enabling biologists, chemists, physicists, and environmental scientists to write, optimize, and debug code without becoming full-stack developers.

Whether implementing a custom statistical test, building a machine learning pipeline for genomic data, or visualizing 3D molecular structures in PyMOL, the model can produce a well-commented first draft in seconds. It is often most useful for debugging. When a researcher encounters a cryptic error in a Python script using SciPy, or a MATLAB matrix dimension mismatch, pasting the traceback into the interface often yields a clear explanation and a corrected code snippet, which should still be run and checked before it is trusted.

The model also suggests best practices for memory management, parallel processing, vectorization, and version control—critical for handling large datasets common in modern research. By automating boilerplate coding tasks, ChatGPT allows scientists to focus on experimental design, hypothesis testing, and data interpretation rather than syntax errors or dependency conflicts.

Enhancing User Interfaces & Documentation

One of the biggest barriers to scientific software adoption is usability. Many powerful computational tools feature command-line interfaces or steep learning curves that deter wet-lab researchers. ChatGPT enables the creation of conversational UIs that translate natural language requests into backend operations. Instead of navigating complex dropdown menus or memorizing CLI flags, users can type: “Normalize this RNA-seq dataset, remove batch effects, and generate a PCA plot.” The system routes the request to appropriate libraries, executes the pipeline, and returns results with interpretive summaries.
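
The routing step can be sketched as follows, assuming the language model has already translated the user's request into a JSON list of step names. The step names and placeholder functions here are hypothetical; real steps would call the lab's actual analysis libraries.

```python
import json
from typing import Callable, Dict, List

State = List[str]


# Placeholder steps that only record what they would do.
def normalize(state: State) -> State:
    return state + ["normalized"]


def remove_batch_effects(state: State) -> State:
    return state + ["batch effects removed"]


def pca_plot(state: State) -> State:
    return state + ["PCA plot generated"]


# Only steps listed here can ever be executed.
ALLOWED_STEPS: Dict[str, Callable[[State], State]] = {
    "normalize": normalize,
    "remove_batch_effects": remove_batch_effects,
    "pca_plot": pca_plot,
}


def run_plan(plan_json: str) -> State:
    """Validate a model-produced plan against the allowlist, then run it."""
    plan = json.loads(plan_json)
    if not isinstance(plan, list):
        raise ValueError("plan must be a JSON list of step names")
    unknown = [
        s for s in plan if not isinstance(s, str) or s not in ALLOWED_STEPS
    ]
    if unknown:
        raise ValueError(f"unknown steps: {unknown}")
    state: State = []
    for name in plan:
        state = ALLOWED_STEPS[name](state)
    return state


# Hypothetical model output for the RNA-seq request.
plan_from_model = '["normalize", "remove_batch_effects", "pca_plot"]'
print(run_plan(plan_from_model))
```

Validating the plan against an allowlist before running anything means a malformed or invented step name fails loudly instead of being executed.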

Documentation is another area where the model shines. Scientific software projects often suffer from outdated manuals, fragmented wikis, or incomplete API references. ChatGPT can automatically generate comprehensive documentation from code comments, usage logs, and test cases. It can also create interactive tutorials, FAQ sections, and troubleshooting guides tailored to different user skill levels. This democratizes access to advanced computational tools and ensures that software remains maintainable as research teams evolve.

Integrating with Existing Lab Software Ecosystems

Laboratories rarely operate with a single software platform. Instead, they rely on a fragmented ecosystem of commercial and open-source tools: ELNs, LIMS, statistical packages, instrument control suites, and institutional data repositories. ChatGPT acts as a unifying layer through API integrations and plugin architectures. By connecting to platforms like Benchling, LabArchives, or custom R/Python workflows, it can synchronize metadata, auto-fill experimental records, and ensure compliance with FAIR (Findable, Accessible, Interoperable, Reusable) data principles.

When a researcher completes an assay, the AI assistant can automatically extract key parameters, link raw data files to secure cloud storage, generate a methods section draft, and flag any missing controls or calibration steps. This seamless integration reduces administrative overhead, minimizes transcription errors, and creates a continuous audit trail essential for regulatory compliance, grant reporting, and reproducibility.
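
Flagging missing controls or calibration steps does not have to rely on the model alone; a deterministic check can run first. This is a small sketch, and the field names and sample record are assumptions rather than the schema of any particular ELN or LIMS.

```python
from typing import Dict, List

# Hypothetical required fields for an assay record.
REQUIRED_FIELDS = (
    "sample_id",
    "instrument",
    "calibration_date",
    "negative_control",
    "positive_control",
)


def missing_fields(record: Dict[str, object]) -> List[str]:
    """Return required fields that are absent, None, or an empty string."""
    return [f for f in REQUIRED_FIELDS if record.get(f) in (None, "")]


# Made-up record with an empty calibration date and no positive control.
record = {
    "sample_id": "S-001",
    "instrument": "plate reader",
    "calibration_date": "",
    "negative_control": "buffer only",
}
print(missing_fields(record))
```

The resulting list can be shown to the researcher directly or included in the prompt so the assistant's summary mentions exactly what is missing.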

Real-World Applications & Case Studies

While large-scale commercial deployments are still emerging, numerous academic and industry labs are already piloting ChatGPT-integrated workflows with measurable results. In a 2023 pilot at a leading European research university, computational biologists used the model to automate the annotation of CRISPR-Cas9 off-target effects, reducing analysis time by 60% while maintaining 98% accuracy against manual validation.

A biotech startup in California integrated ChatGPT with their high-throughput screening platform to generate real-time hit/miss reports, enabling faster lead optimization cycles and reducing compound waste by an estimated 22%. Environmental monitoring stations have also begun leveraging AI assistants to interpret sensor networks measuring air quality, soil moisture, and water chemistry. By feeding real-time telemetry into the model via secure APIs, field researchers receive instant alerts, trend analyses, and recommended sampling adjustments without needing on-site data engineers.

In pharmaceutical R&D, regulatory affairs teams use AI assistants to draft compliance documentation, cross-reference FDA and EMA guidelines, and standardize SOPs across global sites. These early adopters consistently report faster turnaround times, reduced training costs, and improved cross-departmental collaboration. Crucially, all successful implementations maintain human-in-the-loop validation, underscoring that AI augments rather than replaces scientific judgment.

Limitations, Challenges & Ethical Considerations

Despite its promise, integrating ChatGPT into scientific instruments and software is not without significant challenges. The most critical issue is hallucination: the model can generate plausible-sounding but factually incorrect code, misinterpret instrument outputs, or cite non-existent literature. In high-stakes environments like clinical diagnostics, aerospace testing, or GMP manufacturing, unverified AI recommendations could compromise safety, validity, or regulatory compliance. Therefore, human oversight, rigorous validation protocols, and sandboxed testing environments remain non-negotiable.
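
One lightweight validation protocol is to run any AI-generated function against hand-checked reference cases before accepting it. The sketch below illustrates the idea with a Beer-Lambert concentration calculation (c = A / (ε·l)). The harness, the two candidate functions, and the reference cases are illustrative assumptions, and the harness does not sandbox the code, so untrusted code would still need an isolated environment.

```python
import math
from typing import Callable, List, Sequence, Tuple

Case = Tuple[Sequence[float], float]


def validate_candidate(
    func: Callable[..., float], cases: List[Case], rel_tol: float = 1e-9
) -> List[str]:
    """Run func on each reference case and return failure descriptions."""
    failures = []
    for args, expected in cases:
        try:
            result = func(*args)
        except Exception as exc:
            failures.append(f"{tuple(args)}: raised {type(exc).__name__}")
            continue
        if not math.isclose(result, expected, rel_tol=rel_tol):
            failures.append(
                f"{tuple(args)}: got {result}, expected {expected}"
            )
    return failures


def concentration_ok(absorbance: float, epsilon: float, path_cm: float) -> float:
    """Beer-Lambert law solved for concentration."""
    return absorbance / (epsilon * path_cm)


def concentration_buggy(absorbance: float, epsilon: float, path_cm: float) -> float:
    """A plausible-looking but wrong rearrangement of the same law."""
    return absorbance * epsilon * path_cm


# Hand-checked reference cases: (absorbance, epsilon, path length) -> mol/L
CASES: List[Case] = [
    ((0.5, 5000.0, 1.0), 1e-4),
    ((1.0, 2000.0, 0.5), 1e-3),
]

print(validate_candidate(concentration_ok, CASES))
print(len(validate_candidate(concentration_buggy, CASES)))
```

The buggy version reads as reasonable code and runs without error, which is exactly why reference cases matter: only a comparison against known answers exposes it.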

Data privacy and intellectual property also pose hurdles. Many research institutions handle sensitive, proprietary, or patient-derived data. Transmitting this information to cloud-based AI models requires strict compliance with GDPR, HIPAA, FERPA, and institutional data governance policies. Some organizations are deploying localized, open-source LLMs fine-tuned on internal datasets to mitigate these risks while preserving performance.

Over-reliance on AI assistants could also erode foundational technical skills among early-career researchers. If every data analysis step or debugging task is outsourced to an AI, scientists may lose the intuition needed to recognize methodological flaws or design robust experiments. Balancing automation with pedagogical rigor is essential for sustainable scientific training.

Ethical considerations extend to authorship, reproducibility, and algorithmic bias. If ChatGPT contributes significantly to code development, data interpretation, or manuscript drafting, how should it be acknowledged? Major journals and funding agencies are already drafting guidelines for AI disclosure in scientific publications. Furthermore, models trained on historical literature may perpetuate biases in experimental design, statistical methodology, or literature citation patterns. Transparent documentation of AI usage, version control, and peer review of AI-generated outputs will be critical as these tools become mainstream.

The Future of AI-Driven Scientific Tools

The next decade will witness a paradigm shift from AI-assisted to AI-driven scientific infrastructure. Future iterations of language models will be natively multimodal, processing spectral data, microscopy images, electrophysiological traces, and sensor telemetry alongside text and code. Edge AI deployments will enable real-time instrument control without cloud dependency, crucial for field research, remote environmental monitoring, and secure facilities with strict data sovereignty requirements.

We’re already seeing prototypes of “self-driving labs” where AI plans experiments, operates robotic platforms, analyzes results, and iterates hypotheses with minimal human intervention. ChatGPT and its successors will serve as the cognitive orchestrators of these systems, translating high-level research questions into executable experimental workflows.

Standardization efforts will accelerate interoperability. Initiatives like the FAIR principles, combined with AI-ready instrument communication protocols, will create plug-and-play ecosystems where language models seamlessly orchestrate cross-platform workflows. Open-source scientific AI frameworks will democratize access, allowing smaller labs and institutions in developing regions to leverage cutting-edge tools without prohibitive licensing costs.

Collaboration between AI developers, instrument manufacturers, academic consortia, and regulatory bodies will establish certification standards, validation benchmarks, and ethical guidelines specific to research applications. The goal isn’t to replace human researchers, but to create symbiotic systems where AI handles complexity at scale while scientists focus on creativity, critical thinking, and discovery.

Conclusion

ChatGPT’s integration into scientific instruments and software marks a pivotal moment in the evolution of research methodology. By bridging the gap between complex hardware, computational workflows, and human expertise, it empowers scientists to work faster, smarter, and more collaboratively. From real-time data interpretation and automated instrument control to accelerated software development and predictive maintenance, the applications are already yielding measurable gains in productivity, reproducibility, and accessibility.

However, realizing the full potential of AI-driven research tools requires vigilance. Rigorous validation, robust data governance, ethical transparency, and continuous human oversight must accompany technological adoption. As laboratories transition from pilot projects to institutional standards, the focus will shift from “Can AI do this?” to “How do we responsibly scale AI across our research ecosystem?”

The future of scientific instrumentation is not about replacing researchers. It’s about augmenting human ingenuity with intelligent systems that handle complexity at scale. By embracing ChatGPT and next-generation AI assistants as collaborative partners, the scientific community can accelerate discovery, democratize access to advanced tools, and tackle the most pressing challenges of our time. The era of AI-augmented science is here. The question is no longer whether we should adopt it, but how thoughtfully we will integrate it into the foundation of modern research.