Beyond the Prompt: How ChatGPT is Reshaping Research Design in the Age of AI

The academic landscape is undergoing a quiet but profound transformation. Where researchers once relied solely on textbooks, peer mentorship, and trial-and-error to craft methodological blueprints, many now begin with a simple text box. ChatGPT and similar large language models (LLMs) have rapidly migrated from novelty tools to collaborative thought partners in the research process. Yet, for all the excitement surrounding AI-generated summaries, polished prose, and instant answers, one of the most consequential yet underexplored applications lies in research design.

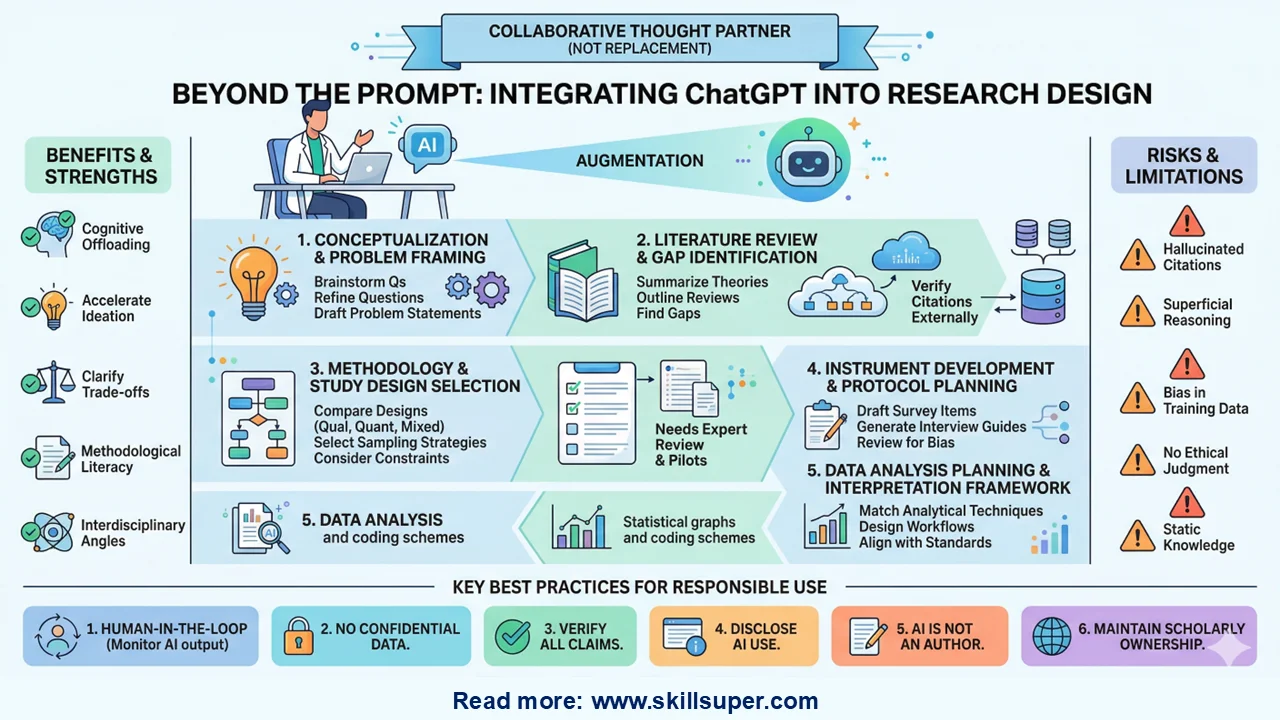

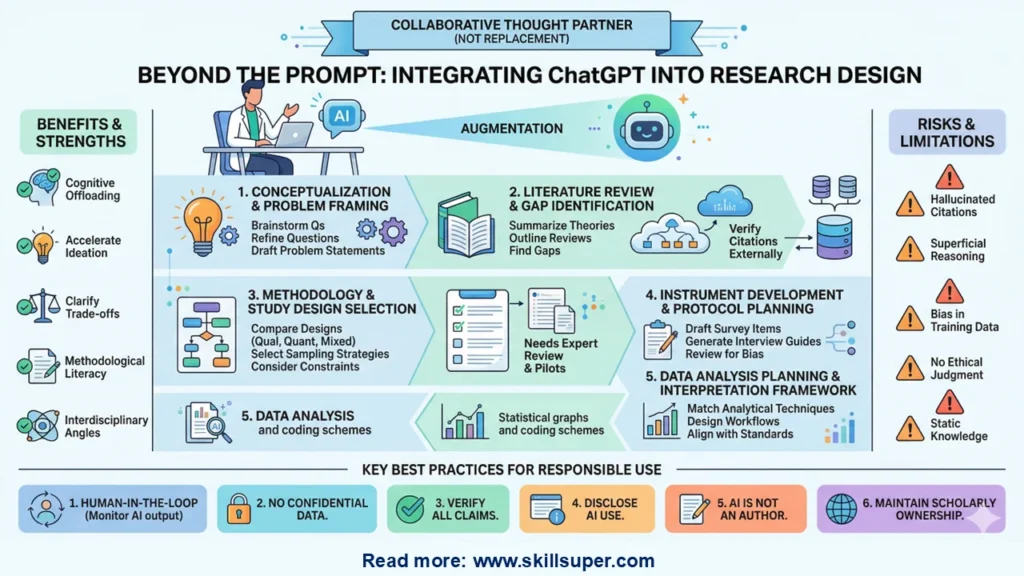

Research design is the architectural framework of any scholarly inquiry. It dictates how questions are framed, how data are gathered, how variables are controlled or interpreted, and how validity, reliability, and ethical integrity are maintained. It is inherently iterative, deeply contextual, and dependent on human judgment. So where does an AI language model fit into such a disciplined process? The answer lies not in replacement but in augmentation. When used strategically, ethically, and critically, ChatGPT can accelerate ideation, surface blind spots, clarify methodological trade-offs, and streamline documentation. But it also introduces new risks: hallucinated citations, superficial reasoning, and the illusion of expertise.

This article explores how ChatGPT can be responsibly integrated into each phase of research design, outlines its genuine strengths and hard limits, and provides actionable best practices for researchers who want to harness AI without compromising scholarly rigor.

What Is Research Design, and Why Does AI Matter Here?

At its core, research design is the plan that connects research questions to empirical evidence. It encompasses the philosophical stance (e.g., positivist, interpretivist, pragmatist), the methodological approach (quantitative, qualitative, mixed methods), sampling strategies, data collection instruments, analytical frameworks, and procedures for ensuring trustworthiness or statistical power. A strong design anticipates limitations, aligns methods with questions, and remains adaptable to real-world constraints.

Traditionally, mastering research design requires years of apprenticeship, coursework, peer review, and hands-on project management. ChatGPT does not replace that journey, but it can compress the learning curve. By serving as an on-demand methodological sounding board, it helps researchers iterate faster, explore alternatives they might overlook, and structure complex decisions before committing resources. The key is treating AI not as an authority, but as a highly responsive, highly fallible collaborator.

Phase 1: Conceptualization & Problem Framing

Every study begins with a question. Yet novice researchers often struggle to transform broad interests into focused, researchable problems. ChatGPT is well suited to this early stage, both for generating many candidate questions (divergence) and for narrowing them to a workable few (convergence).

How it helps:

- Brainstorming research questions from vague topics or real-world observations

- Refining questions using established frameworks (e.g., PICO for health sciences, SPIDER for qualitative research, FINER for checking that a question is feasible, interesting, novel, ethical, and relevant)

- Identifying interdisciplinary angles or emerging theoretical lenses

- Drafting clear problem statements and research objectives

Example prompt:

“I’m interested in remote work and employee well-being. Help me develop three specific, researchable questions using a quantitative, qualitative, and mixed-methods lens. For each, suggest a theoretical framework and note one potential limitation.”

Critical caveat: AI lacks contextual intuition about funding landscapes, institutional priorities, or field-specific debates. It may suggest questions that are theoretically elegant but empirically impractical. Always validate feasibility through pilot scoping, supervisor consultation, or preliminary literature mapping.

Phase 2: Literature Review & Gap Identification

A robust research design must be grounded in what is already known. ChatGPT can accelerate the early stages of literature engagement, though it should never be treated as a replacement for systematic database searching.

How it helps:

- Summarizing seminal theories, debates, or methodological traditions

- Generating structured outlines for literature reviews

- Highlighting contradictions or underexplored areas in existing work

- Suggesting key search terms, Boolean strings, or conceptual maps

- Explaining complex theoretical models in accessible language

Example prompt:

“Map the major theoretical perspectives on digital literacy in higher education since 2015. Identify two recurring methodological gaps and suggest how a new study could address one of them.”

Critical caveat: Unless it is connected to a search or retrieval tool, ChatGPT does not query live academic databases or preprint servers, and it cannot read paywalled full texts. It frequently hallucinates citations, misattributes findings, or conflates similar studies. Use it as a conceptual compass, then verify every reference through Scopus, Web of Science, PubMed, ERIC, or your institution’s library portal. Tools like Consensus, Elicit, or Semantic Scholar are better suited for AI-assisted literature discovery because they anchor responses to actual published papers.
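To make the Boolean strings mentioned above concrete, here is a minimal Python sketch that assembles one from groups of synonyms. The function name `build_boolean_query` and the example terms are illustrative assumptions, and the output uses generic AND/OR syntax; each database has its own field tags and truncation rules, so check its search help before running the string.

```python
def build_boolean_query(concept_groups):
    """Join synonyms with OR inside each concept, then join concepts with AND."""
    clauses = []
    for synonyms in concept_groups:
        # Quote multi-word terms so they are searched as exact phrases.
        terms = [f'"{term}"' if " " in term else term for term in synonyms]
        clauses.append("(" + " OR ".join(terms) + ")")
    return " AND ".join(clauses)


# Hypothetical concept groups for the digital literacy example.
query = build_boolean_query([
    ["digital literacy", "information literacy"],
    ["higher education", "university", "college"],
])
print(query)
```

Keeping the synonym groups in a list makes it easy to record exactly which terms were searched, whether ChatGPT or a librarian suggested them.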

Phase 3: Methodology & Study Design Selection

Choosing between an RCT, case study, grounded theory, or sequential mixed-methods design requires weighing epistemological alignment, resource constraints, and analytical feasibility. ChatGPT can clarify these trade-offs.

How it helps:

- Comparing methodological approaches side-by-side

- Explaining sampling strategies (e.g., purposive, stratified, snowball, cluster)

- Discussing validity, reliability, trustworthiness, and bias mitigation

- Drafting methodology section outlines aligned with journal guidelines

- Translating research questions into measurable or observable variables

Example prompt:

“I’m designing a study on teacher burnout in rural schools. Compare cross-sectional survey design vs. longitudinal qualitative interviews in terms of feasibility, generalizability, ethical considerations, and analytical complexity. Recommend one based on a 6-month timeline and limited funding.”

Critical caveat: AI does not understand your institutional review board (IRB) requirements, local ethics norms, participant recruitment realities, or disciplinary conventions. It may recommend methods that are statistically sound but logistically impossible. Always cross-reference AI suggestions with methodological textbooks, peer-reviewed design papers, and advisor feedback.
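Of the sampling strategies listed above, stratified sampling is the easiest to show in a few lines. The sketch below is one simple variant (proportional allocation with at least one unit per stratum), written with Python's standard library only. The sampling frame, the stratum labels, and the name `stratified_sample` are hypothetical; a real design would also need to justify the strata and the sampling fraction.

```python
import random
from collections import defaultdict


def stratified_sample(units, stratum_of, fraction, seed=0):
    """Draw roughly the same fraction of units from every stratum."""
    rng = random.Random(seed)  # fixed seed so the draw can be reproduced
    strata = defaultdict(list)
    for unit in units:
        strata[stratum_of(unit)].append(unit)
    sample = []
    for members in strata.values():
        # Whole number of units per stratum, never fewer than one.
        k = max(1, round(len(members) * fraction))
        sample.extend(rng.sample(members, k))
    return sample


# Hypothetical sampling frame: 80 rural and 20 town teachers.
frame = [("rural", i) for i in range(80)] + [("town", i) for i in range(20)]
chosen = stratified_sample(frame, stratum_of=lambda unit: unit[0], fraction=0.1)
print(len(chosen))
```

Recording the seed alongside the frame lets a reviewer reproduce the exact draw.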

Phase 4: Instrument Development & Protocol Planning

Surveys, interview guides, observation protocols, and consent forms are the operational heart of research design. Poorly constructed instruments introduce measurement error, response bias, or ethical vulnerabilities.

How it helps:

- Drafting initial survey items aligned with specific constructs

- Generating semi-structured interview guides with probing questions

- Reviewing questions for leading language, double-barreled phrasing, or cultural bias

- Suggesting validation steps (e.g., cognitive interviewing, pilot testing, Cronbach’s alpha targets)

- Drafting IRB-ready participant information sheets and consent templates

Example prompt:

“Draft a 10-item Likert-scale survey measuring perceived academic resilience among first-generation college students. Ensure items avoid jargon, cover emotional, behavioral, and cognitive dimensions, and include 2 reverse-scored items. Note how I should validate this before full deployment.”

Critical caveat: AI-generated instruments are starting points, not finished products. They have no established psychometric properties, and they may embed cultural assumptions or include items that stray from the intended construct. All instruments must undergo expert review, cognitive pretesting, reliability/validity analysis, and ethical scrutiny before use.
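Two of the validation ideas in this phase, reverse-scored items and Cronbach’s alpha, can be sketched with Python's standard library. Alpha is computed here as k/(k-1) × (1 − sum of item variances / variance of total scores), where k is the number of items. The pilot responses below are invented purely for illustration, and the function names are assumptions; a real pilot would need many more respondents, and alpha alone does not establish validity.

```python
from statistics import variance


def reverse_score(value, low=1, high=5):
    """Flip a Likert response so a high score always means more of the construct."""
    return low + high - value


def cronbach_alpha(rows):
    """rows: one list of item scores per respondent, all the same length."""
    k = len(rows[0])
    items = list(zip(*rows))  # transpose: one tuple of scores per item
    item_variance_sum = sum(variance(item) for item in items)
    total_variance = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - item_variance_sum / total_variance)


# Invented pilot data: 5 respondents, 3 items, third item is reverse-worded.
raw = [[4, 5, 2], [2, 3, 4], [5, 5, 1], [3, 3, 3], [1, 2, 5]]
scored = [[a, b, reverse_score(c)] for a, b, c in raw]
alpha = cronbach_alpha(scored)
print(round(alpha, 3))
```

Reverse-scoring has to happen before alpha is computed; otherwise the reverse-worded item correlates negatively with the rest and drags the estimate down.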

Phase 5: Data Analysis Planning & Interpretation Framework

Thoughtful research design anticipates analysis before data collection begins. Deciding on statistical tests, coding schemes, or integration strategies shapes how instruments are built and samples are selected.

How it helps:

- Matching analytical techniques to research questions and data types

- Explaining assumptions of parametric vs. non-parametric tests

- Outlining qualitative coding workflows (open, axial, selective; thematic analysis)

- Designing mixed-methods integration matrices or joint displays

- Drafting analysis plans that align with reporting standards (e.g., CONSORT, COREQ, STROBE)

Example prompt:

“I’m collecting survey data on workplace flexibility (continuous) and turnover intention (binary), plus open-ended responses about decision-making autonomy. Suggest an analytical plan that integrates both, notes required assumptions, and recommends software workflows.”

Critical caveat: In a plain chat session, ChatGPT is not running your analyses, and it cannot diagnose model misspecification or interpret context-rich findings from data it has never seen. It may suggest advanced techniques (e.g., structural equation modeling, multilevel modeling) without warning about sample size requirements or convergence issues. Use it to draft analysis frameworks, then validate with statisticians, methodologists, or software documentation.
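Sample size requirements are one place where a quick check of your own is worthwhile. The sketch below uses the standard normal-approximation formula for a two-sided comparison of two group means, n per group ≈ 2(z₁₋α/₂ + z_power)² / d², where d is the standardized effect size. The function name `n_per_group` is an assumption, and the formula covers only this simple case; it slightly underestimates t-test requirements and says nothing about SEM or multilevel models, which need their own planning.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(effect_size_d, alpha=0.05, power=0.80):
    """Approximate n per group for a two-sided, two-sample comparison of means."""
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1 - alpha / 2)  # critical value for the test
    z_power = standard_normal.inv_cdf(power)          # quantile for the target power
    return ceil(2 * (z_alpha + z_power) ** 2 / effect_size_d ** 2)


# Smaller expected effects need far larger samples.
for d in (0.2, 0.5, 0.8):
    print(d, n_per_group(d))
```

Running a check like this before accepting an AI-suggested design makes it obvious when a proposed analysis is out of reach for the available sample.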

Strengths & Opportunities: Why Researchers Are Adopting AI

When deployed intentionally, ChatGPT offers several tangible advantages in research design:

- Cognitive Offloading: Reduces the mental friction of structuring complex decisions, allowing researchers to focus on higher-order critical thinking.

- Methodological Literacy: Democratizes access to design knowledge, especially for early-career researchers, independent scholars, or those outside well-resourced institutions.

- Interdisciplinary Bridging: Suggests frameworks, terminology, and methods from adjacent fields that might otherwise remain siloed.

- Iterative Refinement: Enables rapid prototyping of questions, instruments, and analysis plans without committing hours to drafting.

- Clarity & Consistency: Helps align research questions, methods, and analysis into a coherent, logically flowing design document.

Limitations & Critical Caveats: What ChatGPT Cannot Do

AI is not a researcher. It is a pattern-matching engine trained on vast text corpora. Understanding its boundaries is essential to scholarly integrity:

- Hallucination: Fabricated citations, misattributed findings, or plausible-sounding but false methodological claims.

- Lack of Causal Reasoning: LLMs reproduce associations between concepts found in text; they do not reliably reason about causality, context, or real-world constraints.

- Training Data Bias: Recommendations may reflect historical academic trends, Western-centric paradigms, or outdated methodological norms.

- No Ethical Judgment: Cannot evaluate participant vulnerability, power dynamics, or cultural appropriateness.

- Static Knowledge: Training cutoff dates and the lack of real-time learning mean it can miss recent methodological innovations or guideline updates.

Over-reliance on AI can lead to superficial designs that look rigorous on paper but collapse under peer review or empirical testing.

Ethical Considerations & Best Practices

Integrating ChatGPT into research design requires transparency, verification, and human accountability. Follow these guidelines:

- Never input confidential, identifiable, or unpublished data. Treat prompts as public. Use anonymized, hypothetical scenarios.

- Verify every claim, citation, and methodological recommendation. Cross-check with primary sources, textbooks, or subject-matter experts.

- Disclose AI use appropriately. Follow journal, funder, and institutional policies. Many journals now require a disclosure statement in the acknowledgments or methodology section.

- AI is not an author. Per COPE, ICMJE, and APA guidelines, LLMs cannot meet authorship criteria. They are tools, not intellectual contributors.

- Maintain a human-in-the-loop workflow. Use AI for drafting, brainstorming, or explaining; use humans for deciding, validating, and taking responsibility.

- Document your prompts and iterations. Keep a research log of how AI suggestions were modified, rejected, or integrated. This strengthens transparency and reproducibility.

- Train in AI literacy. Understanding prompt engineering, model limitations, and verification techniques is becoming as essential as learning statistical software.
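The advice above to document prompts and iterations can be as simple as an append-only log file. The following Python sketch is one possible shape for such a log, not a standard; the field names, the `PromptLogEntry` class, and the example entry are all assumptions chosen for illustration.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class PromptLogEntry:
    prompt: str
    model: str       # label of the tool or model used (free text)
    decision: str    # e.g. "integrated", "modified", or "rejected"
    rationale: str   # why the suggestion was kept, changed, or dropped
    timestamp: str = ""


def format_entry(entry):
    """Serialize one entry as a single JSON line, adding a UTC timestamp if missing."""
    record = asdict(entry)
    if not record["timestamp"]:
        record["timestamp"] = datetime.now(timezone.utc).isoformat()
    return json.dumps(record, ensure_ascii=False)


def append_entry(path, entry):
    """Append the entry to a JSON Lines file, one record per line."""
    with open(path, "a", encoding="utf-8") as log_file:
        log_file.write(format_entry(entry) + "\n")


# Hypothetical example entry; no real data or identifiers.
entry = PromptLogEntry(
    prompt="Compare survey vs. interview designs for a 6-month study.",
    model="ChatGPT",
    decision="modified",
    rationale="Kept the survey design; shortened the instrument after advisor feedback.",
)
print(format_entry(entry))
```

Calling `append_entry("ai_research_log.jsonl", entry)` (a hypothetical file name) after each working session builds a record that can be cited in a methods section or shared with a supervisor. Because prompts should never contain confidential data, the log itself stays safe to share.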

The Future of AI-Assisted Research Design

The trajectory is clear: AI will become increasingly embedded in research workflows. We are already seeing specialized research assistants integrated with reference managers, automated protocol reviewers that flag methodological inconsistencies, and AI-ethics checkers that scan for cultural bias or privacy risks. Future iterations will likely offer tighter integration with statistical software, real-time literature mapping, and discipline-specific design templates.

Yet the core of research design will remain irreplaceably human. Curiosity, ethical discernment, contextual awareness, and intellectual courage cannot be automated. AI will not replace researchers who think critically; the researchers at risk are those who never learn to work with it. Academic training programs must evolve to teach not just methodology, but AI-augmented methodology: how to prompt wisely, verify rigorously, integrate transparently, and maintain scholarly ownership.

Conclusion: Designing with Intelligence, Not Illusion

ChatGPT is not a shortcut to rigorous research. It is a mirror that reflects back what we ask of it, amplified by patterns in human knowledge. In research design, it can help frame sharper questions, clarify methodological trade-offs, draft stronger protocols, and anticipate analytical pathways. But it cannot replace the researcher’s responsibility to validate, contextualize, ethically ground, and ultimately own the design.

The most successful researchers in the coming decade will not be those who avoid AI, nor those who outsource their thinking to it. They will be those who treat AI as a disciplined collaborator: prompting with precision, integrating with transparency, and deciding with expertise. Research design has always been an art informed by science. Now, it is an art augmented by intelligence. Used wisely, ChatGPT doesn’t weaken the foundation of scholarly inquiry; it helps us build it faster, more clearly, and more thoughtfully.