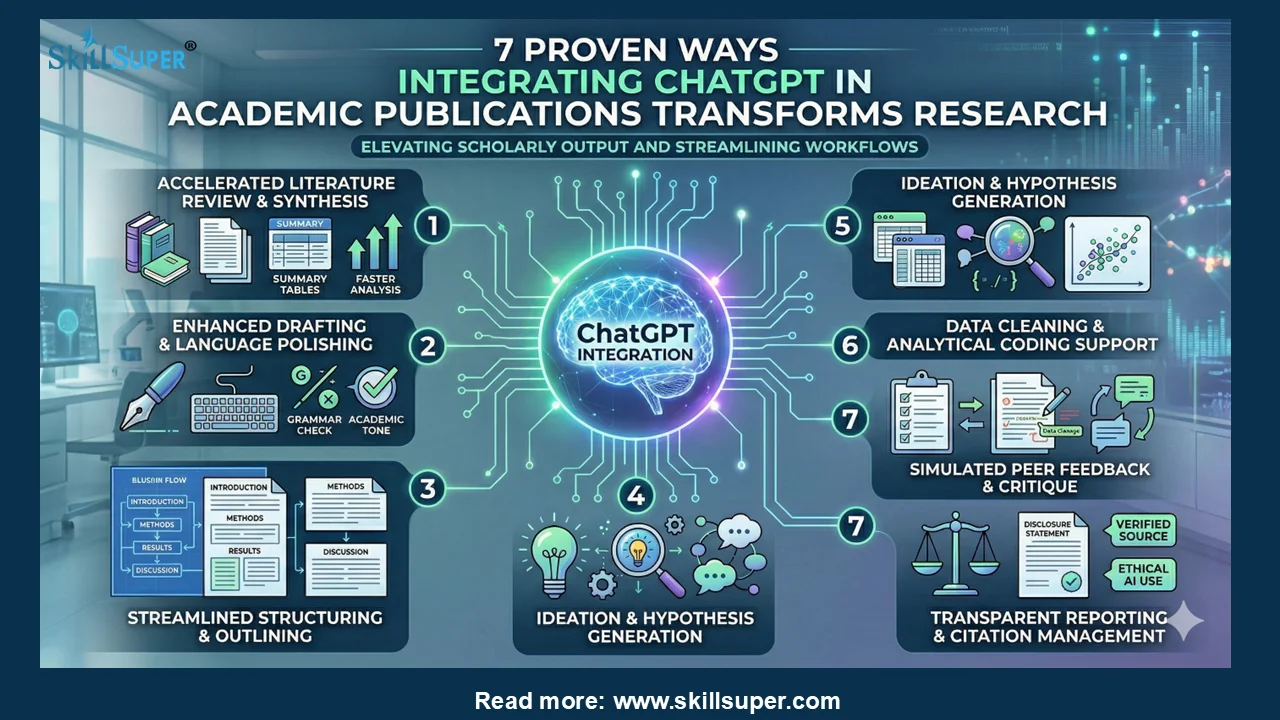

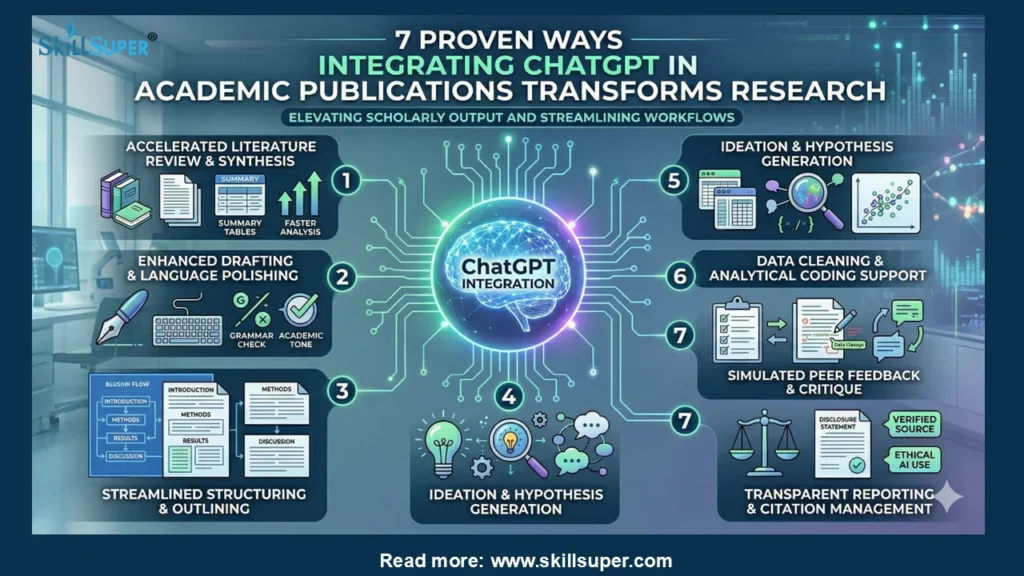

7 Proven Ways Integrating ChatGPT in Academic Publications Transforms Research

Academic publishing faces unprecedented change. Researchers now navigate tighter deadlines, rising submission volumes, and stricter peer-review standards. Artificial intelligence enters this landscape as a powerful ally. Scholars increasingly explore integrating ChatGPT in academic publications to streamline workflows and elevate scholarly output. This shift demands careful navigation. Institutions, journals, and authors must balance innovation with rigorous academic standards. The following guide examines proven applications, ethical boundaries, and actionable best practices. You will discover how to harness this technology responsibly.

The Evolution of AI in Scholarly Writing

Traditional academic writing relied heavily on manual drafting and iterative peer feedback. Word processors and citation managers introduced early efficiency gains. Today, large language models represent a far larger step. Trained on vast text corpora that include scholarly writing, these systems can summarize or rephrase a supplied passage in seconds. They recognize much discipline-specific terminology and adapt to formal academic tone. Researchers have quickly recognized the potential and now experiment with AI to overcome common bottlenecks.

Journals initially reacted with hesitation. Some publishers banned AI-generated content outright. Editorial boards soon realized that complete prohibition ignored reality. Major organizations like the Committee on Publication Ethics (COPE) released more nuanced guidance. These frameworks emphasize transparency and human accountability. Many journals now require authors to declare AI assistance in their methodology or acknowledgments sections. This policy shift establishes clear boundaries. It allows integrating ChatGPT in academic publications while preserving scholarly integrity.

Accelerating Literature Synthesis and Research Design

Scholars spend countless hours mapping existing literature. They identify theoretical gaps and justify novel hypotheses. AI can compress this phase considerably. Researchers feed relevant abstracts into carefully constructed prompts. The system extracts key methodologies, findings, and contradictions. The output is a draft literature matrix, structured by study, that is ready for checking.
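As a rough illustration of a "carefully constructed prompt", the sketch below assembles one prompt that asks for a matrix row per abstract. It is only a sketch: the `Abstract` class, the column names, and the instruction wording are assumptions made for this example, and the code deliberately stops at building the prompt text rather than calling any particular AI service.

```python
from dataclasses import dataclass


@dataclass
class Abstract:
    key: str   # hypothetical citation key chosen by the author
    text: str  # abstract text copied from the original source


# Assumed matrix columns; adapt them to the review's research questions.
MATRIX_COLUMNS = ("methodology", "key findings", "contradictions with other entries")


def build_matrix_prompt(abstracts: list[Abstract]) -> str:
    """Assemble a single prompt requesting one table row per abstract."""
    header = (
        "For each abstract below, fill in one table row with these columns: "
        + ", ".join(MATRIX_COLUMNS)
        + ". Use only information stated in the abstract; "
        "write 'not stated' otherwise."
    )
    # Label each abstract with its key so rows can be traced back to sources.
    body = "\n\n".join(f"[{a.key}]\n{a.text.strip()}" for a in abstracts)
    return f"{header}\n\n{body}"
```

Labeling every abstract with its own key keeps each row of the returned matrix traceable to a source, which makes later checking faster.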

Authors must verify every AI-generated summary against original sources. Hallucinations remain a documented limitation. The model occasionally invents citations or misattributes claims. Cross-referencing with databases like Scopus or Web of Science exposes fabricated references, and reading the cited passage confirms that each claim is attributed correctly. Once validated, the synthesized material strengthens introduction sections. It also clarifies research questions. This efficiency allows scientists to dedicate more time to experimental design and data collection.
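Part of this cross-referencing can be scripted. The sketch below assumes the author has exported a set of DOIs from a database search and wants to flag any AI-cited DOI that is missing from that export. The function names are illustrative, and the DOIs in the usage note are placeholders, not real references.

```python
def normalize_doi(doi: str) -> str:
    """Lowercase a DOI and strip common URL or 'doi:' prefixes."""
    doi = doi.strip().lower()
    for prefix in ("https://doi.org/", "http://doi.org/", "doi:"):
        if doi.startswith(prefix):
            doi = doi[len(prefix):]
    return doi.strip()


def unverified_dois(ai_cited: list[str], verified_export: set[str]) -> list[str]:
    """Return the AI-cited DOIs that do not appear in the verified export."""
    known = {normalize_doi(d) for d in verified_export}
    return [d for d in ai_cited if normalize_doi(d) not in known]
```

For example, `unverified_dois(["doi:10.1234/example.999"], {"10.1234/example.001"})` returns the first list unchanged, which marks that reference for manual follow-up. A match only shows that a reference exists; whether the cited work actually supports the claim still has to be checked by reading it.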

Enhancing Drafting, Clarity, and Structural Flow

Academic manuscripts demand precise logic and formal syntax. Non-native English speakers face additional barriers. Language editing services often carry steep fees. AI provides an accessible alternative for preliminary refinement. Writers paste rough drafts and request specific improvements. They ask the system to improve coherence, eliminate redundancy, or adjust tone.

The technology excels at identifying convoluted sentence structures. It suggests active voice alternatives. It highlights ambiguous transitions between paragraphs. Authors retain full editorial control. They accept or reject every suggestion. This collaborative editing process elevates readability without compromising original meaning. Peer reviewers notice cleaner drafts. They spend less time deciphering prose and more time evaluating scientific merit. Integrating ChatGPT in academic publications thus raises overall submission quality.

Navigating Ethical Boundaries and Authorship Standards

Ethics committees monitor AI adoption closely. They question whether AI qualifies as a co-author. Current consensus rejects this classification. Authorship requires intellectual accountability and creative contribution. Algorithms lack consciousness, intent, and moral responsibility. Journals explicitly state that only human researchers may claim authorship.

Transparency remains non-negotiable. Researchers must disclose AI usage in methods or acknowledgments. Some publishers require detailed prompts and version numbers. Others mandate statements confirming human verification of all outputs. These requirements prevent deception. They maintain public trust in scientific literature. Institutions should develop clear internal policies. Faculty training programs must address responsible AI literacy. Students require guidance on citation conventions for AI-assisted work.

Mitigating Bias, Hallucination, and Data Privacy Risks

No technology operates without limitations. Large language models inherit biases from training data. They may amplify gender stereotypes or favor Western research paradigms. Scholars must critically evaluate AI suggestions against diverse perspectives. They should actively seek underrepresented sources and counter-narratives.

Data privacy presents another critical concern. Researchers may be tempted to upload sensitive datasets, patient information, or proprietary findings. Public AI platforms may store these inputs or use them for training. Uploading such material can violate confidentiality agreements and institutional review board protocols. Academics should use enterprise-grade, privacy-compliant AI solutions. They should anonymize all data before processing. They must never submit unpublished results to open models without institutional approval. Proactive risk management protects both researchers and participants.
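A first pass at anonymizing text can be automated before anything leaves the researcher's machine. The sketch below masks email addresses and participant identifiers with regular expressions. The `P-0042` identifier format is a hypothetical example, and pattern-based masking on its own does not amount to formal de-identification, so treat this as a starting point rather than a compliance tool.

```python
import re

# Simple email pattern; it will not cover every valid address form.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# Hypothetical participant-ID format (e.g. "P-0042"); adjust to the study's scheme.
PARTICIPANT_ID = re.compile(r"\bP-\d{4}\b")


def redact(text: str) -> str:
    """Replace emails and participant IDs with neutral placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PARTICIPANT_ID.sub("[PARTICIPANT]", text)
```

Names, dates, locations, and rare conditions can still identify a participant after this step, so a manual review against the approved protocol remains necessary.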

Institutional Strategies and Policy Development

Universities shape how faculty and students interact with AI. Progressive administrations draft comprehensive usage frameworks. These documents outline acceptable applications, disclosure requirements, and violation consequences. They align with journal policies and funding agency mandates.

Faculty development workshops prove highly effective. They demonstrate prompt engineering techniques tailored to academic writing. They showcase discipline-specific use cases. They emphasize verification protocols and ethical reporting. Students benefit equally. Writing centers integrate AI literacy into their tutoring curricula. They teach learners how to critique machine-generated feedback. They foster critical thinking alongside technological fluency. This balanced approach prepares the next generation of scholars for evolving publication landscapes.

The Future Trajectory of AI-Assisted Scholarship

Technological advancement is likely to accelerate. Multimodal models can already interpret images alongside text, and their analysis of charts, statistical outputs, and experimental images should become more dependable. Interactive AI reviewers may simulate peer critique before submission. Publishers may adopt automated compliance checks for methodology transparency. These developments would further normalize AI as a research companion rather than a replacement.

Human expertise will grow increasingly valuable. Scholars will focus on conceptual innovation, experimental rigor, and ethical oversight. AI will handle structural optimization, language refinement, and initial literature mapping. This partnership maximizes productivity while preserving intellectual sovereignty. Researchers who adapt early will gain competitive advantages. They will publish more efficiently and communicate complex ideas more clearly. Integrating ChatGPT in academic publications will transition from experimental practice to standard scholarly workflow.

Actionable Recommendations for Authors and Reviewers

Begin by auditing your current workflow. Identify repetitive tasks that drain creative energy. Test AI tools on low-stakes drafts before applying them to major submissions. Always maintain a verification checklist. Cross-reference every citation, statistic, and claim against primary sources. Document your prompts and system versions for transparency.
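One lightweight way to document prompts and versions is a structured log kept alongside the manuscript. The sketch below is a minimal example under stated assumptions: the `AIUsageRecord` fields and the wording of the generated statement are illustrative and are not any journal's mandated template.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class AIUsageRecord:
    date: str             # ISO 8601 date of the session
    tool: str             # e.g. "ChatGPT"
    version: str          # version label exactly as the tool reports it
    purpose: str          # e.g. "language editing"
    prompt: str           # the prompt text as submitted
    human_verified: bool  # True once an author has checked the output


def to_jsonl(records: list[AIUsageRecord]) -> str:
    """Serialize records as JSON Lines, one record per line."""
    return "\n".join(json.dumps(asdict(r), ensure_ascii=False) for r in records)


def disclosure_statement(records: list[AIUsageRecord]) -> str:
    """Draft a disclosure sentence; refuse if any output is unverified."""
    if not records:
        raise ValueError("no AI usage recorded")
    if not all(r.human_verified for r in records):
        raise ValueError("every AI output must be verified before disclosure")
    tools = sorted({f"{r.tool} ({r.version})" for r in records})
    purposes = sorted({r.purpose for r in records})
    return (
        f"The authors used {', '.join(tools)} for {', '.join(purposes)}. "
        "All outputs were reviewed and verified by the authors."
    )
```

Refusing to draft a statement while any record is unverified ties the disclosure to the verification checklist, so the two cannot drift apart. The target journal's own required wording should always take precedence over the drafted sentence.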

Reviewers should familiarize themselves with AI-assisted manuscripts. They must evaluate methodological soundness independently of writing polish. They should report suspected undisclosed AI generation to editorial offices following journal protocols. Editors must update submission guidelines regularly. They should require standardized AI disclosure statements. They should provide clear examples of acceptable versus unacceptable usage. Consistent enforcement builds trust across the publishing ecosystem.

Conclusion

Academic publishing stands at a transformative threshold. Artificial intelligence offers unprecedented efficiency, clarity, and accessibility. Researchers who embrace responsible AI integration will navigate modern scholarly demands more effectively. The key lies in disciplined usage, rigorous verification, and unwavering ethical commitment. Journals, institutions, and authors must collaborate continuously. They must refine policies, expand training, and prioritize transparency. When implemented thoughtfully, this technology elevates scholarly communication without compromising intellectual integrity. The future of academic publishing will reward those who harness AI as a disciplined tool rather than a shortcut. Scholars who master this balance will shape the next era of global research excellence.